Microsoft unveils its first in-house AI models

Plus: 🎯 How to Analyze Video Ads in Seconds Using Gemini, OpenAI’s GPT-Realtime and Realtime API go live, and more!

Hello Readers👀

Curious about the biggest AI moves today? You’re in the right place. In today’s edition, we have:

🤖 Microsoft Debuts Its First In-House AI Models

🎯 How to Analyze Video Ads in Seconds Using Gemini

🎙️ OpenAI’s GPT-Realtime and Realtime API Go Live

🔨 Tools and Shifts you cannot miss

🤖 Microsoft Debuts Its First In-House AI Models

Microsoft has unveiled two new in-house models, MAI-Voice-1 and MAI-1-preview, signaling a shift away from heavy reliance on OpenAI and toward building its own foundation models.

The Shift:

1. MAI-Voice-1: Ultra-Fast Speech AI - MAI-Voice-1 can generate a full minute of expressive speech in under a second on a single GPU, making it one of the fastest speech systems available. It’s already powering Copilot Daily and Podcasts, with storytelling demos and meditations now available in Copilot Labs.

2. MAI-1-preview: Instruction-Focused Text Model - Trained on 15,000 NVIDIA H100 GPUs, MAI-1-preview is Microsoft’s first full foundation model, designed for instruction-following and everyday queries.

3. Strategic Shift in AI Independence - Microsoft AI CEO Mustafa Suleyman says MAI-1-preview is “up there with some of the best models in the world,” though benchmarks are still pending. The text model is currently being tested on LM Arena and via API, with Microsoft planning to roll it out for “certain text use cases” inside Copilot in the coming weeks.

By launching its own models, Microsoft gains flexibility in shaping Copilot while reducing risk tied to its OpenAI partnership. Voice AI brings new creative and consumer-facing applications, while the MAI-1 text model lays the foundation for broader enterprise and everyday use.

🎯 How to Analyze Video Ads in Seconds Using Gemini

Watching and breaking down ads manually is a massive time sink for strategists. With Gemini, you can automate structure breakdowns, emotional arcs, and creative insights, all by pasting a single prompt.

Step 1: Go to gemini.google.com and log in with your Google account

Step 2: Upload your video ad. You can either paste a YouTube link or upload a short video file directly (MP4, WebM, etc.)

Step 3: Paste the following prompt:

You are a top-tier DTC creative strategist. Watch this video ad and break it down like a pro. Give me a clear, timestamped analysis including:

Hook breakdown (0–3s): What attention trigger is used?

Emotional arc (3–15s): How is desire or tension built?

Product pitch (15–30s): What’s being sold, and how clearly?

CTA clarity (final 5s): Is it direct, urgent, or frictionless?

Then add:

Frameworks used (e.g. PAS, BAB, demo-to-CTA)

Visual/audio triggers likely driving performance

2 improved variants you’d test next

Step 4: Gemini will return a full breakdown in seconds

You can use it to build a variant brief for your next ad, spot weak points in storytelling or the CTA, or create a creative testing roadmap. This method turns Gemini into your personal ad strategist, saving hours of manual breakdowns and helping you scale variant production more intelligently.
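If you run this breakdown often, it can help to keep the prompt in code so you can change time windows or add questions without retyping it. Here is a minimal Python sketch that assembles the same prompt from a small list of segments. The names `Segment`, `SEGMENTS`, `EXTRAS`, and `build_ad_prompt` are made up for illustration and are not part of Gemini or any Google tool; you would still paste the resulting text into Gemini yourself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    """One timestamped part of the ad to analyze (hypothetical helper type)."""
    label: str
    window: str
    question: str


# Segments and extras copied from the prompt in Step 3.
SEGMENTS = (
    Segment("Hook breakdown", "0–3s", "What attention trigger is used?"),
    Segment("Emotional arc", "3–15s", "How is desire or tension built?"),
    Segment("Product pitch", "15–30s", "What's being sold, and how clearly?"),
    Segment("CTA clarity", "final 5s", "Is it direct, urgent, or frictionless?"),
)

EXTRAS = (
    "Frameworks used (e.g. PAS, BAB, demo-to-CTA)",
    "Visual/audio triggers likely driving performance",
    "2 improved variants you'd test next",
)


def build_ad_prompt(segments=SEGMENTS, extras=EXTRAS) -> str:
    """Return the full analysis prompt as one newline-separated string."""
    lines = [
        "You are a top-tier DTC creative strategist. Watch this video ad and "
        "break it down like a pro. Give me a clear, timestamped analysis including:",
        "",
    ]
    # One line per segment: "Label (window): question"
    lines += [f"{s.label} ({s.window}): {s.question}" for s in segments]
    lines += ["", "Then add:", ""]
    lines += list(extras)
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_ad_prompt())
```

For a longer ad, pass your own tuple of `Segment` values with wider time windows and keep the rest of the prompt unchanged.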

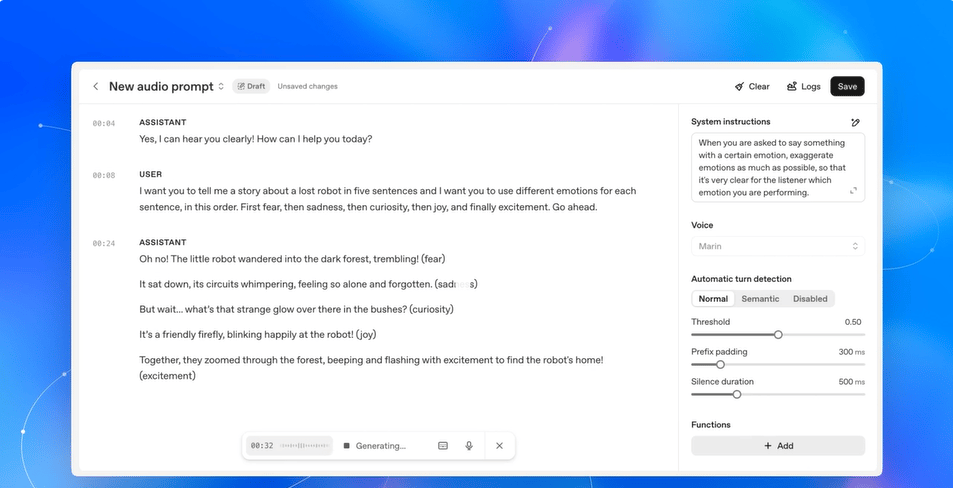

🎙️ OpenAI’s GPT-Realtime and Realtime API Go Live

OpenAI just took its Realtime API out of beta and introduced GPT-Realtime, its most advanced speech-to-speech model yet. The update brings human-like voice, tool integration, and real-world production features that finally make voice agents viable for businesses at scale.

The Shift:

1. Human-Level Voice AI - GPT-Realtime produces speech that laughs, sighs, switches languages mid-sentence, and follows nuanced prompts like “speak empathetically in a French accent.” The new voices, Cedar and Marin, plus upgrades to the existing eight, make conversations sound natural enough for customer support or education.

2. Real-World Integrations - The API now supports Session Initiation Protocol (SIP), meaning GPT-Realtime can pick up and handle actual phone calls. It also supports remote MCP servers for direct tool integration and image inputs so users can upload screenshots mid-call for live interpretation.

3. Faster, Cheaper, Production-Ready - By handling audio in a single model instead of chaining separate speech-to-text and text-to-speech stages, GPT-Realtime reduces latency and keeps conversational nuance intact. Pricing is now 20% cheaper than before: $32 per million audio input tokens and $64 per million audio output tokens.

Voice AI has long been clunky, stitched together, and too robotic for production use. GPT-Realtime flips that script by offering human-like dialogue, tool connectivity, and phone integration in one package, removing friction for developers and enterprises.
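To get a feel for what the quoted rates mean per conversation, here is a rough Python sketch of the arithmetic. It uses only the two per-million-token prices and the 20% figure mentioned above. The token counts in the usage example are invented, and the "previous rate" is simply what the 20% cut implies mathematically, not a quoted price. Real bills may include other line items (text tokens, cached input) that this ignores.

```python
# Rates quoted above, in USD per one million tokens.
INPUT_USD_PER_M = 32.0
OUTPUT_USD_PER_M = 64.0
DISCOUNT = 0.20  # "20% cheaper than before"


def session_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimated USD cost for one session at the quoted rates."""
    total = input_tokens * INPUT_USD_PER_M + output_tokens * OUTPUT_USD_PER_M
    return total / 1_000_000


def implied_previous_rate(current_rate: float, discount: float = DISCOUNT) -> float:
    """Rate before the cut, assuming current = previous * (1 - discount)."""
    return current_rate / (1.0 - discount)


if __name__ == "__main__":
    # Hypothetical call: 50k input tokens and 20k output tokens.
    print(f"Session cost: ${session_cost(50_000, 20_000):.2f}")
    print(f"Implied old input rate: ${implied_previous_rate(INPUT_USD_PER_M):.2f}/M")
    print(f"Implied old output rate: ${implied_previous_rate(OUTPUT_USD_PER_M):.2f}/M")
```

Because output tokens cost twice as much as input tokens, agents that talk more than they listen will feel the output rate most.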

🔨 AI Tools for the Shift

📊 Clamor – AI-powered social media analysis for smarter decisions. Understand audiences and optimize engagement.

⚡ WeWeb – Generate web apps in minutes with AI. No coding, just creation.

🎵 GSong.ai – Create professional-quality songs from text in seconds. Music production made simple.

📝 PromptBuilder.cc – Turn simple ideas into professional AI prompts instantly. Boost output with smarter prompts.

🎬 Veespark AI Video Generator – Convert scripts, images, and ideas into videos. Fast AI-powered creation for content teams.

🚀 Quick Shifts

📄 Anthropic will begin training Claude AI on user chat transcripts and coding sessions starting September 28, 2025, unless users opt out, while extending data retention for non-opted-out users to five years.

🤖 xAI unveiled Grok Code Fast 1, a fast and affordable agentic AI coding model (formerly codenamed sonic), optimized for languages like Python and Go, and free for a limited time through select launch partners.

🧮 MathGPT.ai, a “cheat-proof” AI tutor using Socratic questioning, expands from 30 to 50+ institutions, giving professors control over use, adding LMS integrations, accessibility features, and stricter anti-cheating safeguards.

🎥 Krea launched a waitlist for its new Realtime Video feature, letting users create and edit video through canvas painting, text prompts, or live webcam feeds while maintaining strong visual consistency.

💻 OpenAI has enhanced Codex with new features: IDE extensions (VS Code, Cursor), a GPT‑5–powered CLI agent, and automated GitHub pull‑request code reviews for seamless, agentic developer workflows.

That’s all for today’s edition. See you tomorrow as we track down and bring you everything that matters in the daily AI Shift!
